Flowforest Intelligence Technology

SRAM-CIM Computing

The Last Mile of Edge AI

Flowforest builds the FFI8805 plug-and-play Edge AI platform on an SRAM-CIM core architecture, pairing it with a mature process node to maximize efficiency and unlock mass deployment of Edge AI.

Breaking Through the Memory Wall

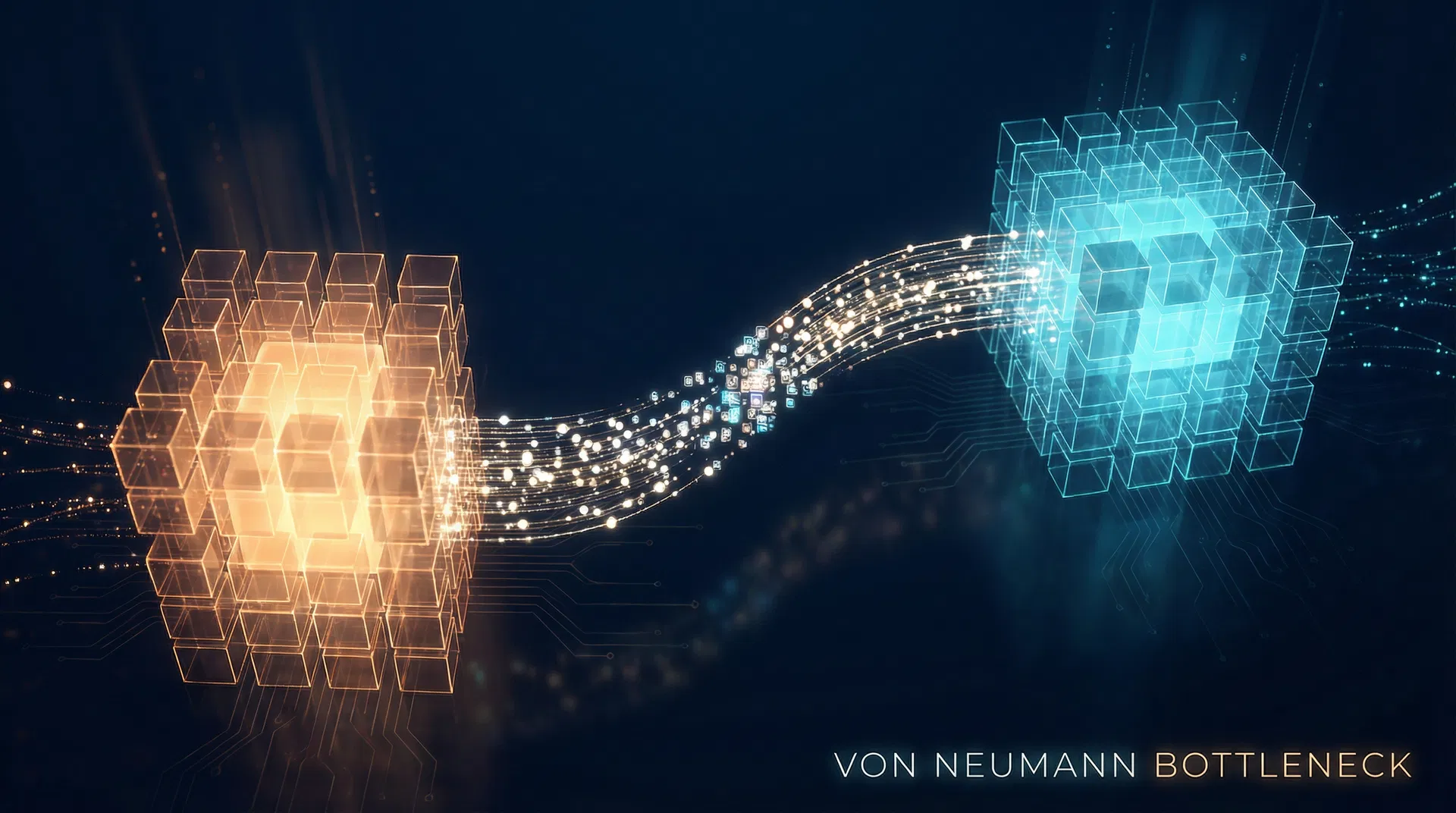

The traditional von Neumann architecture separates the processor from memory, requiring frequent data transfers between them, creating the so-called "memory wall" bottleneck. As AI model sizes have grown explosively, the latency and power consumption of data movement have become the key constraints limiting AI computing power.

Computing-in-Memory (CIM) technology emerged to address this. Its core idea is to perform computation directly within the memory cells, integrating data storage and logic operations at the physical level so that most of the overhead of shuttling data between memory and processor disappears.

Core Concept: Let computation happen where the data resides rather than moving data to the processor. This redefines the "essence of computing."
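The idea can be illustrated with a rough traffic count. The sketch below is a simplified model, not a description of the FFI8805: it assumes a single matrix-vector product, 8-bit weights and activations, no caching on the von Neumann side, and weights that stay resident in the array on the CIM side. The names `LayerShape`, `von_neumann_traffic`, and `cim_traffic` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LayerShape:
    rows: int               # number of outputs (M)
    cols: int               # number of input activations (N)
    weight_bytes: int = 1   # assumption: 8-bit weights
    act_bytes: int = 1      # assumption: 8-bit activations and outputs


def von_neumann_traffic(layer: LayerShape) -> int:
    """Bytes crossing the processor-memory bus for one matrix-vector product,
    assuming every weight is fetched once and nothing is cached."""
    weights = layer.rows * layer.cols * layer.weight_bytes
    inputs = layer.cols * layer.act_bytes
    outputs = layer.rows * layer.act_bytes
    return weights + inputs + outputs


def cim_traffic(layer: LayerShape) -> int:
    """Bytes crossing the array boundary when weights stay in the memory
    array: only activations go in and results come out."""
    inputs = layer.cols * layer.act_bytes
    outputs = layer.rows * layer.act_bytes
    return inputs + outputs


layer = LayerShape(rows=256, cols=512)
vn = von_neumann_traffic(layer)
cim = cim_traffic(layer)
print(f"von Neumann: {vn} bytes, CIM: {cim} bytes, ratio: {vn / cim:.1f}x")
```

Under these assumptions the weight matrix dominates the von Neumann traffic, which is why keeping weights in place matters most for inference workloads. Real devices add tiling, buffering, and conversion overheads that this count ignores.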

Von Neumann bottleneck: The data channel between processor and memory becomes the performance bottleneck

Von Neumann vs CIM Architecture

Data must be shuttled between processor and memory, causing latency and power bottlenecks

CIM Technology Timeline

Von Neumann Architecture Born

The classic computing paradigm separating processor and memory was established, laying the foundation for modern computers.

Memory Wall Emerges

AI model sizes surged rapidly; data movement latency and power consumption became critical bottlenecks.

CIM Technology Rises

CIM concepts moved from academia to industry, with multiple companies investing in SRAM-based CIM chips.

Commercialization Accelerates

Vendor-E Flagship SoC adopted CIM architecture; Vendor-A invested $20B to license Groq LPU technology.

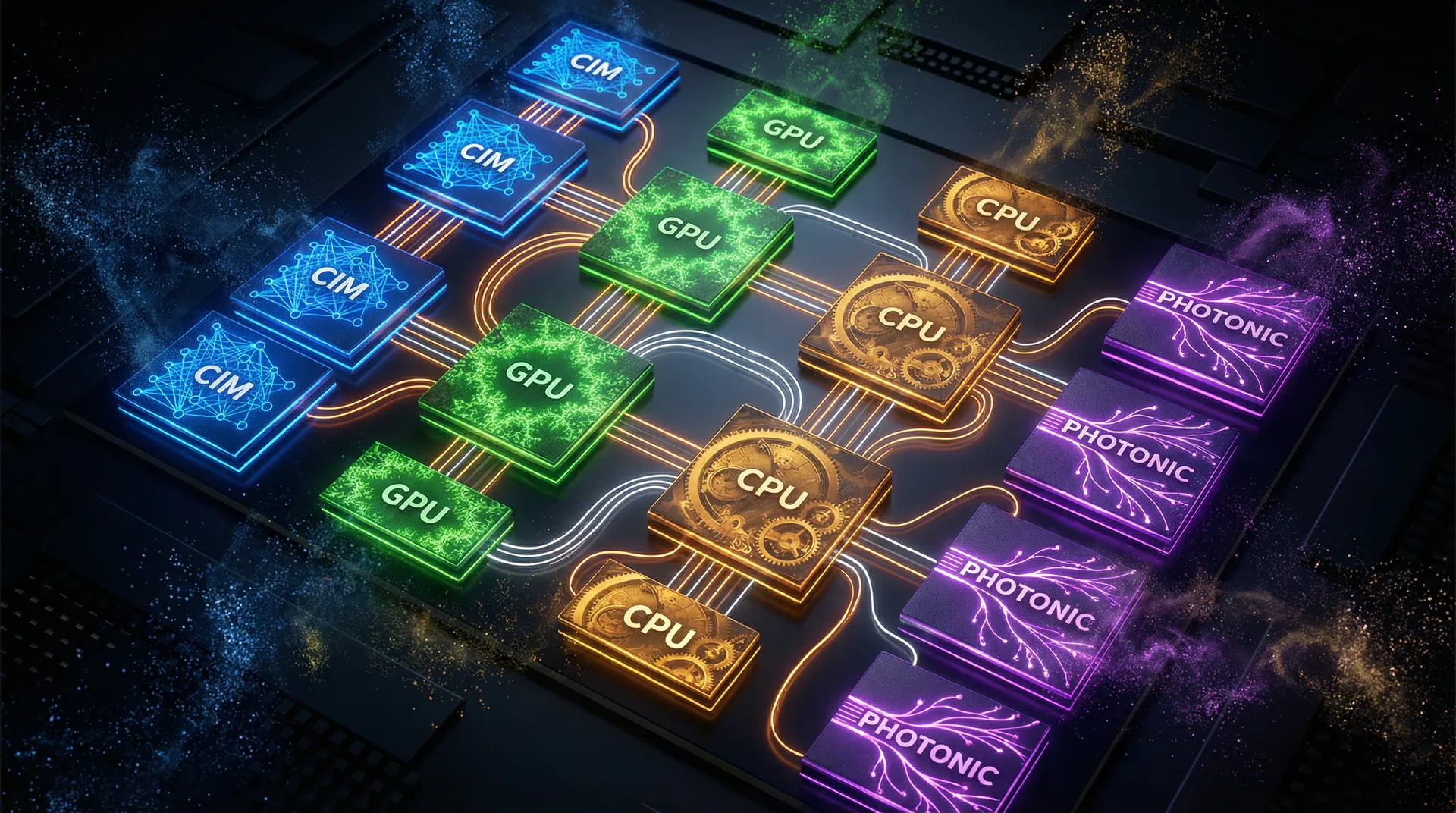

Multi-Architecture Era

CIM mass production penetration expected to exceed 35%; AI chips enter the new era of LPU, GPU, TPU coexistence.

Empowering Edge AI

FFI8805 uses an SRAM-CIM in-memory computing architecture to achieve efficient AI inference at under 1 W. Its standardized plug-and-play modules reduce development cycles from 12 weeks to 4 weeks.

FFI8805 empowers edge AI devices: IPC cameras, industrial controllers, smart home, wearables

FFI8805 Mini

Lightweight vision & voice recognition module

FFI8805 Pro

SLM & hierarchical inference module

AI Chip Market Trends & Forecast

Chart: 2020–2030 global AI chip market size (billion USD) and estimated CIM penetration (%).

Sources: Compiled from Gartner, IDC, TrendForce, and other research estimates

Three Pain Points of Edge AI Deployment

Mass deployment of Edge AI faces three major pain points: difficult integration (inconsistent hardware and software specifications, with integration cycles lasting months), high cloud costs (compounded by poor real-time performance and privacy concerns), and orphaned devices (scattered deployments that lack OTA updates and remote diagnostics). According to Gartner, 85% of Edge AI projects fail to scale due to a lack of effective lifecycle management.

Difficult Integration

Inconsistent hardware and software specifications make each project resemble assembling a kit car. Integration cycles lasting months severely delay time-to-market.

High Cloud Costs

Running all inference in the cloud is expensive, delivers poor real-time performance, and raises privacy concerns, which together prevent mass commercialization.

Orphaned Devices

Devices are scattered across sites and lack OTA updates and remote diagnostics, which makes maintenance extremely costly.

CIM architecture: Data flows within memory arrays without external movement

FFI8805 Solutions

Business Model & Ecosystem

FFI adopts a four-pillar business model: Hardware + SaaS Subscription + Certification Ecosystem + IP Licensing.

Hardware Modules

Standardized FFI8805 modules

SaaS Subscription

SDK toolkit + Cloud platform

Certification Ecosystem

FFI-Ready compatibility

IP Licensing

Core architecture licensing

FFI ecosystem: Hardware modules, SDK tools, SaaS platform, and certification system working together

Module Sales (Hardware)

Standardized FFI8805 modules

Software Subscription (SaaS)

SDK development toolkit and cloud operations platform subscription

FFI-Ready Certification

Hardware compatibility testing fees and annual brand licensing fees

Flywheel Effect: The FFI platform drives ecosystem growth through a positive flywheel: More partners → More deployments → Higher recurring revenue → Stronger ecosystem moat. The certification system and proprietary toolchain increase switching costs, enhancing long-term customer stickiness.

Technology Partners

Partnering with global semiconductor leaders to advance the CIM computing-in-memory ecosystem

Conclusion

The FFI8805 platform adopts an 'asymmetric competition' strategy: rather than competing on raw TOPS, it focuses on performance per watt (Perf/Watt), combining a mature process node with the SRAM-CIM architecture to maximize cost-performance.
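As a rough illustration of ranking by Perf/Watt instead of raw TOPS, the sketch below compares two entries. The figures are hypothetical placeholders chosen for the example, not FFI8805 specifications or measured results, and `perf_per_watt` is an illustrative helper.

```python
def perf_per_watt(tops: float, watts: float) -> float:
    """Return throughput per unit power in TOPS/W."""
    if watts <= 0:
        raise ValueError("power must be positive")
    return tops / watts


# Hypothetical (TOPS, watts) pairs for illustration only; not measured data.
candidates = {
    "high_tops_accelerator": (40.0, 20.0),
    "sub_1w_module": (2.0, 0.5),
}

# Rank by efficiency rather than by peak throughput.
ranked = sorted(
    candidates,
    key=lambda name: perf_per_watt(*candidates[name]),
    reverse=True,
)

for name in ranked:
    tops, watts = candidates[name]
    print(f"{name}: {tops} TOPS at {watts} W -> {perf_per_watt(tops, watts):.1f} TOPS/W")
```

With these placeholder numbers the lower-TOPS entry ranks first, which is the kind of comparison a Perf/Watt strategy relies on for power-constrained edge devices.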

Targeting fragmented markets: FFI8805 focuses on upgrade demand for IPC cameras, industrial controllers, and traditional gateways, providing turnkey solutions and standard modules that upgrade "dumb" devices into AI devices via USB or M.2. Standardized interfaces define the 'USB of Edge AI', while cloud operations (OTA) and pre-trained model libraries raise switching costs for users.

FFI is not just a chip supplier but a key standard setter for Edge AI infrastructure. By building consensus through the Open CIM Alliance (OCA) and co-defining standards with IP-Vendor-A, it aims to form a platform-level ecosystem that solves fragmentation problems major players cannot address, coexisting with the giants instead of competing with them directly.

| Dimension | FFI8805 (OCA) | Traditional AI Accelerators | FFI Advantage |
|---|---|---|---|
| Core Architecture | SRAM-CIM In-Memory Computing | Traditional GPU/NPU Architecture | Ultimate Perf/Watt |
| Integration | Plug-and-Play Standard Modules | Custom dev, long cycles | 4 weeks vs. 12+ weeks |
| Operations | SaaS Cloud OTA + Remote Diagnostics | No unified ops platform | 60% ops cost reduction |
| Ecosystem | OCA Alliance + FFI-Ready Certification | Closed ecosystem, lock-in | Open standards lower barriers |

Latest News

Industry Deployment Highlights

Explore how FFI8805 CIM-First architecture delivers measurable ROI across industries