NEXT-GEN PRODUCT

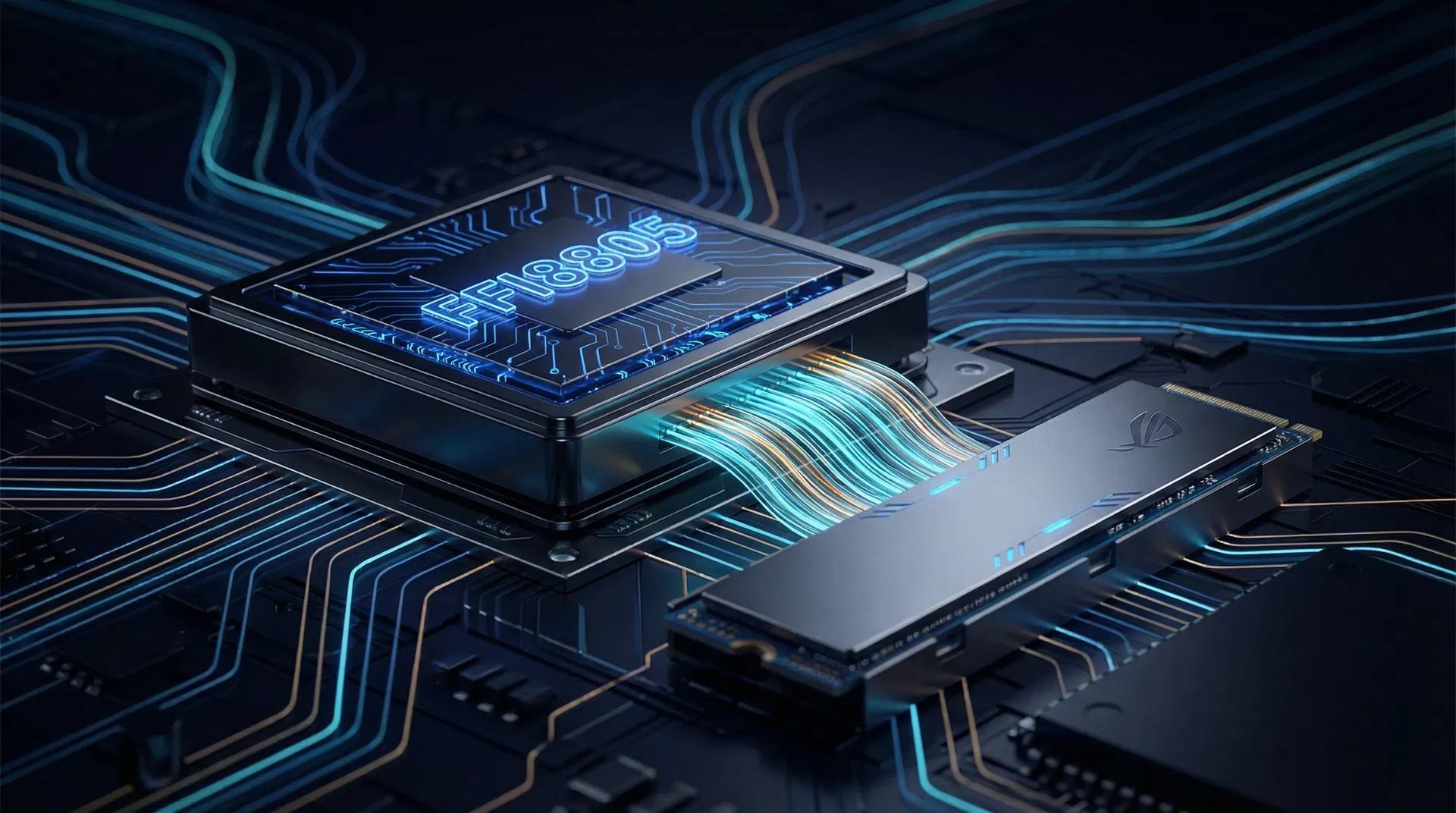

FFI8805 Premium

CIM AI Accelerator × SSD Storage — Complete LLM Solution

FFI8805 Premium integrates a CIM AI acceleration core with an AI-aware SSD controller. It features the DeepSeek V4 Engram persistent memory engine and DualPath dual-channel bandwidth optimization, forming a complete hardware platform for LLM inference.

Three Bottlenecks of LLM Inference

As models like DeepSeek V4 surpass one trillion parameters, traditional GPU + DRAM architectures hit bottlenecks in memory capacity, storage bandwidth, and operational cost at the same time.

Memory Wall

A 671B-parameter model requires more than 1.2 TB of memory. The HBM available on a single GPU node falls far short of that, and the KV-Cache grows linearly with context length.
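The memory figure above can be checked with simple arithmetic. The sketch below is illustrative only: it assumes 2 bytes per parameter (FP16/BF16) for the weights, and it uses the textbook KV-cache formula for plain multi-head attention with hypothetical layer and head counts. It does not model DeepSeek's MLA or Engram, which are designed to reduce this footprint.

```python
def weight_memory_bytes(num_params: int, bytes_per_param: float) -> float:
    """Memory needed just to hold the model weights."""
    return num_params * bytes_per_param


def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_len: int, bytes_per_value: int = 2) -> int:
    """Textbook KV-cache size for one sequence.

    The leading factor 2 counts one key tensor and one value tensor per layer.
    The result is linear in context_len.
    """
    return 2 * num_layers * num_kv_heads * head_dim * context_len * bytes_per_value


PARAMS = 671_000_000_000  # 671B parameters, from the text

# Assumption: 2 bytes per parameter (FP16/BF16); 1 byte for FP8.
fp16_tb = weight_memory_bytes(PARAMS, 2) / 1e12
fp8_tb = weight_memory_bytes(PARAMS, 1) / 1e12

# Hypothetical attention shape, chosen only to show the linear growth.
kv_4k = kv_cache_bytes(num_layers=60, num_kv_heads=8, head_dim=128, context_len=4096)
kv_8k = kv_cache_bytes(num_layers=60, num_kv_heads=8, head_dim=128, context_len=8192)

if __name__ == "__main__":
    print(f"Weights at FP16: {fp16_tb:.3f} TB")
    print(f"Weights at FP8:  {fp8_tb:.3f} TB")
    print(f"KV-cache grows {kv_8k / kv_4k:.1f}x when context doubles")
```

Under the 2-bytes-per-parameter assumption, the weights alone come to about 1.34 TB, which is consistent with the "1.2 TB+" figure quoted above.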

Storage Bandwidth

The Prefill stage requires loading hundreds of GB of model weights from SSD, so traditional single-path PCIe bandwidth becomes the primary bottleneck for inference latency.
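To get a feel for the scale of this bottleneck, the following sketch estimates how long a single PCIe link takes to move a given amount of data. The link widths and the 300 GB payload are assumptions chosen for illustration, not FFI8805 specifications, and the bandwidth is the theoretical line rate after 128b/130b encoding, so real throughput would be lower.

```python
def pcie_bandwidth_gb_per_s(gt_per_s: float, lanes: int,
                            encoding: float = 128 / 130) -> float:
    """Theoretical one-direction PCIe bandwidth in GB/s.

    gt_per_s: per-lane transfer rate (16 for Gen4, 32 for Gen5).
    encoding: 128b/130b line-code efficiency used by Gen3 and later.
    """
    return gt_per_s * lanes * encoding / 8  # divide by 8: bits -> bytes


def load_seconds(size_gb: float, bandwidth_gb_per_s: float) -> float:
    """Lower bound on transfer time, ignoring protocol and software overhead."""
    return size_gb / bandwidth_gb_per_s


gen4_x4 = pcie_bandwidth_gb_per_s(16, 4)
gen5_x4 = pcie_bandwidth_gb_per_s(32, 4)

# Assumption: 300 GB stands in for "hundreds of GB" of weights.
t_gen4 = load_seconds(300, gen4_x4)
t_gen5 = load_seconds(300, gen5_x4)

if __name__ == "__main__":
    print(f"Gen4 x4: {gen4_x4:.2f} GB/s, 300 GB in {t_gen4:.1f} s")
    print(f"Gen5 x4: {gen5_x4:.2f} GB/s, 300 GB in {t_gen5:.1f} s")
```

Even at line rate, a single Gen4 x4 link needs tens of seconds for a payload of this size, which is why a second data path is attractive.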

Operational Cost

Power and cooling costs for large GPU clusters continue to rise, making per-token inference cost difficult to reduce to commercially viable levels.

Three Technology Pillars

FFI8805 Premium combines three breakthrough technologies to optimize LLM inference across model memory, data paths, and storage media.

DeepSeek V4 Engram Persistent Memory

Engram is DeepSeek V4's native persistent memory mechanism. It compresses high-frequency knowledge into structured memory that can be queried in O(1) time, replacing the linear growth of the KV-Cache. It is combined with MLA v2 and FP8 mixed-precision training on 14.8T tokens.

V4 vs V3 Benchmark Gains

DualPath Bandwidth Optimization

DualPath uses the idle SNICs of DE (Data Engine) nodes in AI training clusters to create a second data path: SSD→DE DRAM→CNIC RDMA→GPU. During Prefill, parallel reads over both paths exceed the bandwidth limit of a single PCIe path.
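The benefit of a second path can be approximated with a simple model. The sketch below is an idealized illustration, not the DualPath scheduler: it assumes the two paths are fully independent, that the data can be split freely between them, and that both finish together when the split is proportional to bandwidth. The function names and the bandwidth figures are hypothetical.

```python
def split_by_bandwidth(total_bytes: int, bw_direct: float,
                       bw_second: float) -> tuple:
    """Split a read so both paths finish at roughly the same time.

    Each path receives a share proportional to its bandwidth.
    Returns (bytes_on_direct_path, bytes_on_second_path).
    """
    direct = round(total_bytes * bw_direct / (bw_direct + bw_second))
    return direct, total_bytes - direct


def transfer_seconds(total_bytes: int, bw_direct: float,
                     bw_second: float = 0.0) -> float:
    """Ideal transfer time when the paths run in parallel and add up."""
    return total_bytes / (bw_direct + bw_second)


# Hypothetical numbers: 1000 units of data, direct path 3 units/s,
# second path (via DE DRAM and RDMA) 1 unit/s.
direct_share, second_share = split_by_bandwidth(1000, 3.0, 1.0)
single_path_time = transfer_seconds(1000, 3.0)
dual_path_time = transfer_seconds(1000, 3.0, 1.0)

if __name__ == "__main__":
    print(f"Split: {direct_share} direct, {second_share} via second path")
    print(f"Single path: {single_path_time:.1f} s, dual path: {dual_path_time:.1f} s")
```

In this model the speedup is simply the ratio of combined to single-path bandwidth, so the gain depends on how much spare bandwidth the second path actually has.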

AI-Aware SSD NAND IP Architecture

A 5-layer AI-aware architecture, redesigned from the NAND array up to the acceleration layer, lets the SSD controller understand AI workload access patterns and perform intelligent prefetching, dynamic QoS, and near-storage computing.
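As a rough illustration of what pattern-aware prefetching can mean, the sketch below implements a generic stride prefetcher: when the last few block accesses are evenly spaced, it predicts the next blocks. This is a standard textbook technique used here as a stand-in; the class name and parameters are hypothetical, and nothing in the source describes how the FFI8805 controller actually predicts accesses.

```python
from __future__ import annotations

from collections import deque


class StridePrefetcher:
    """Predict upcoming block addresses from a constant-stride access pattern."""

    def __init__(self, depth: int = 2, history: int = 3) -> None:
        self.depth = depth                    # how many blocks to prefetch ahead
        self.recent = deque(maxlen=history)   # most recent block addresses

    def access(self, block: int) -> list[int]:
        """Record an access and return the blocks worth prefetching (may be empty)."""
        self.recent.append(block)
        if len(self.recent) < self.recent.maxlen:
            return []  # not enough history to detect a pattern yet
        items = list(self.recent)
        strides = {b - a for a, b in zip(items, items[1:])}
        if len(strides) == 1:
            stride = strides.pop()
            if stride != 0:
                return [block + stride * i for i in range(1, self.depth + 1)]
        return []  # irregular pattern: do not prefetch


if __name__ == "__main__":
    prefetcher = StridePrefetcher(depth=2, history=3)
    for blk in (10, 11, 12, 40):
        print(blk, "->", prefetcher.access(blk))
```

Sequential weight loading produces exactly this kind of regular pattern, which is one reason a controller that recognizes it could hide part of the read latency.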

Hardware Specifications

FFI8805 Premium integrates a CIM AI acceleration core, an SSD controller, and a NAND array in a single 2.5" U.2 module. Below are complete specifications for each subsystem.

FFI8805 Premium Specifications

| Component | Specification | Performance |
|---|---|---|

Members-Only Technical Docs

Detailed specifications, memory hierarchy, technical comparisons, application scenarios, and product roadmap are available exclusively to logged-in members.